Chapter 5 Collaboration and Trust

‘[M]ethod’ is not what matters most among companion species; ‘communication’ across irreducible difference is what matters. Situated partial connection is what matters. … Respect is the name of the game (Haraway, 2003, p. 49).

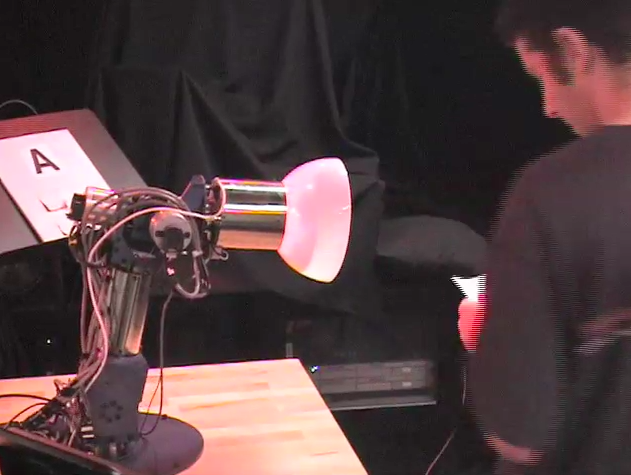

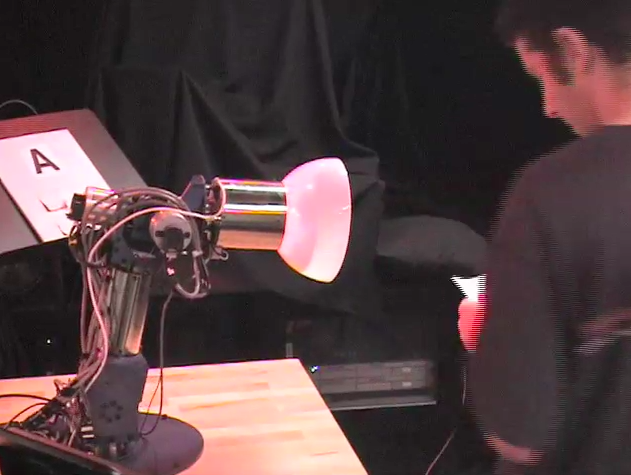

To take things a bit further, and to consider whether and how humans and robots can work together, this chapter considers Guy Hoffman’s creation, AUR, the robotic desk lamp. AUR was created to find out how flexible relations between humans and robots might support better interactions, in particular when they are working together to complete a task. Some key attributes of the relation between human and robot that support such interaction are summed up in Haraway’s quotation. Communication between humans and AUR clearly takes place across an overt “irreducible difference”, and that difference is what allows the partnership to work at all (given that the human directs the movement of the lamp, but relies upon the lamp to turn and shine the right coloured light on cue). Human and robot are in a state of “situated partial connection”, as they work together to complete a repetitive task that both begin to learn as the iterations of the experiment build up over time. Finally, AUR follows the human’s instructions (displaying a kind of respect for them, although it is impossible to say that this robot ‘respects’ the human exactly), but nonetheless communicates its own understanding of the task when misdirected. The double-take of the lamp (its ‘head’ moves the way it ‘thinks’ it should go, then turns back to the human as if to question the misdirection) causes the human to reconsider and issue the correct instruction (in line with the lamp’s ‘understanding’ of the situation). This, alongside the comments of those taking part in the experiments with AUR, demonstrates how human participants develop a respect for the robot and its ability to learn the task, an ability that is vital to completing the task correctly.

Haraway, D. (2003) The companion species manifesto: dogs, people, and significant otherness. Chicago: Prickly Paradigm Press.

We have to see the different maps as answering different kinds of question, questions which arise from different angles in different contexts. … The plurality that results is still perfectly rational. It does not drop us into anarchy or chaos (Midgley, 2002, p. 82).
